Deep Sky Modeling for Single Image Outdoor Lighting Estimation — Supplementary Material

This document extends the dataset and results presented in the main paper and provides additional analysis of our proposed model. Specifically, we provide examples from our panorama dataset as well as additional lighting estimation results. We also provide the images used for our user study. Lastly, we analyze our model through two experiments that demonstrate its generalization capabilities: sky in-filling and semantic mapping.

Table of Contents

- Evaluation dataset (complements sec. 6.1)

- Supplementary lighting estimation results (extends fig. 7)

- User study images (complements table 2)

- Sky In-Filling

- Semantic Mapping

1. Evaluation dataset

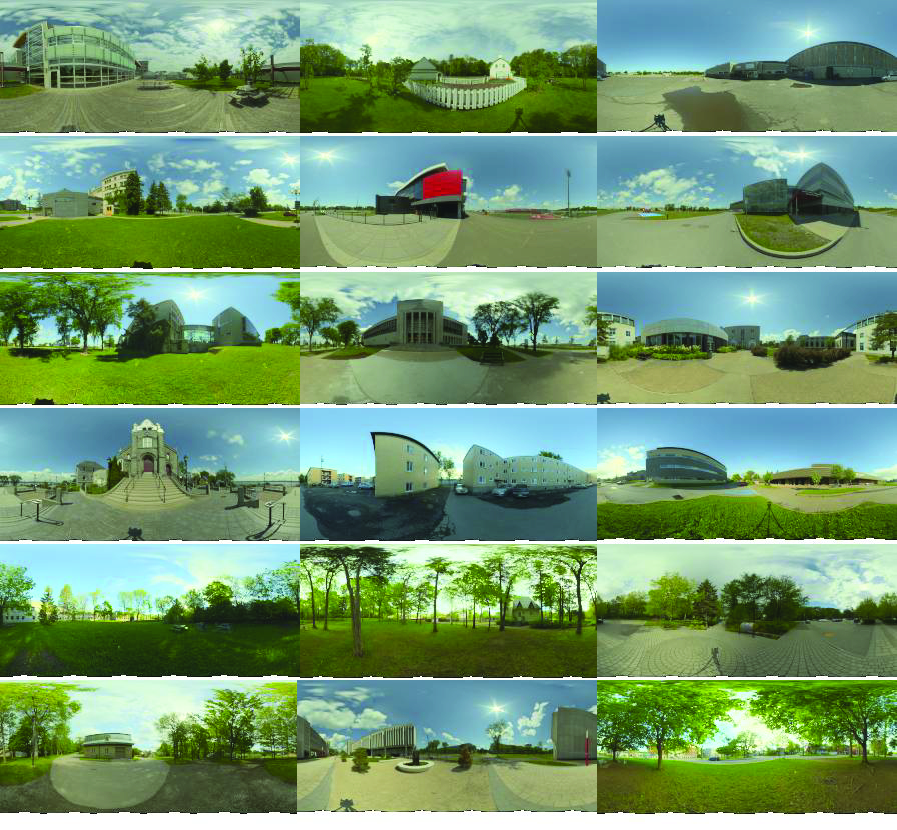

This section shows a sample of panoramas from the evaluation dataset described in sec. 6.1.

Figure 1.1: Subset of our 206-panorama evaluation dataset. Each panorama was tonemapped with the Reinhard operator and gamma-corrected for display.
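For readers who want to reproduce a comparable display mapping, the following is a minimal sketch of a Reinhard-style tonemap followed by gamma encoding. It assumes the simple global form of the operator, x / (1 + x), applied per channel, and a gamma of 2.2; neither the exact variant nor the gamma value is specified in this document, and the function name `reinhard_tonemap` is hypothetical.

```python
import numpy as np


def reinhard_tonemap(hdr, gamma=2.2):
    """Map linear HDR radiance to [0, 1] for display.

    Assumptions (not taken from the paper): the simple global Reinhard
    curve x / (1 + x) applied per channel, then gamma encoding with an
    assumed gamma of 2.2.
    """
    # Radiance is non-negative; clip to guard against small negative values.
    hdr = np.clip(np.asarray(hdr, dtype=np.float64), 0.0, None)
    compressed = hdr / (1.0 + hdr)      # compresses [0, inf) into [0, 1)
    return compressed ** (1.0 / gamma)  # gamma encoding for display


# Toy 2 x 2 RGB "panorama" with one very bright, sun-like pixel.
pano = np.array([[[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]],
                 [[0.2, 0.4, 0.8], [5e4, 5e4, 4e4]]])
ldr = reinhard_tonemap(pano)
```

The curve keeps dark sky regions close to linear while compressing the sun, whose radiance can be several orders of magnitude above the rest of the sky, into the displayable range.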

2. Lighting estimations

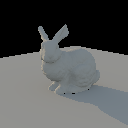

This section presents additional lighting estimations, extending the results shown in fig. 7.

3. User study images

We now present the images used for our user study (sec. 6.4). The bird inserted using the lighting estimated by our method is highlighted in green.

4. Sky In-Filling

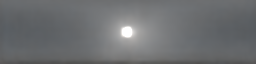

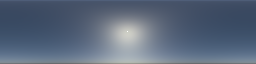

To ensure the robustness of our learned sky model to buildings and other sky occluders, we ask our sky model to reconstruct the sky appearance over the whole sky hemisphere during training, even when the input pixels are masked. We are now interested in our model's capability to extrapolate plausible lighting distributions in regions missing from the input panorama, effectively performing sky in-filling. From partially visible skies (fig. 4.1, left), we observe that our reconstruction yields plausible skies (right), suggesting our sky model understands plausible sky appearance.

Figure 4.1: Sky In-Filling examples. Given a sky with masked regions (left), our sky model fills the missing pixels and provides a plausible reconstruction of the entire sky with respect to the visible context. Panoramas were tonemapped for display.
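The training setup described in this section (masked input, reconstruction target over the whole sky) can be illustrated with a short sketch. It is not our implementation: the zero-fill masking, the mean squared error, and the names `mask_sky` and `full_sky_l2` are assumptions chosen for illustration only.

```python
import numpy as np


def mask_sky(pano, mask):
    """Hide occluded pixels; mask is 1 where the sky is visible, 0 elsewhere.

    Zero-filling the hidden pixels is an assumption made for this sketch.
    """
    return pano * mask[..., None]


def full_sky_l2(pred, target):
    """Mean squared error over every pixel, including those hidden from the
    input. The choice of an L2 loss is an assumption for this sketch."""
    return float(np.mean((pred - target) ** 2))


rng = np.random.default_rng(0)
target = rng.random((8, 16, 3))   # stand-in for a ground-truth sky panorama
mask = np.ones((8, 16))
mask[:, 10:] = 0.0                # hypothetical occluder on the right side
net_input = mask_sky(target, mask)

# A placeholder "model" that simply echoes its masked input is penalized,
# because the loss also covers the region it never saw.
loss_echo = full_sky_l2(net_input, target)
loss_perfect = full_sky_l2(target, target)
```

Because the loss is evaluated on the full panorama rather than only on visible pixels, a model trained this way has to predict the hidden regions from the visible context, which is the behavior shown in fig. 4.1.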

5. Semantic Mapping

We further analyze our sky model by applying UMAP to the sky parameters z from our test set and show the reconstructed skies at the resulting coordinates in fig. 5.1. This mapping suggests that our sky model understands some high-level semantics about the sky, as we see clear trends such as sun elevation and intensity.

Figure 5.1: Diverse sky reconstructions shown as a function of the sky latent representation z of our proposed method, using UMAP to reduce dimensionality from 64 to 2. This map suggests our sky model understands high-level semantics about the lighting, such as sun elevation (vertical axis) and cloudiness (horizontal axis).