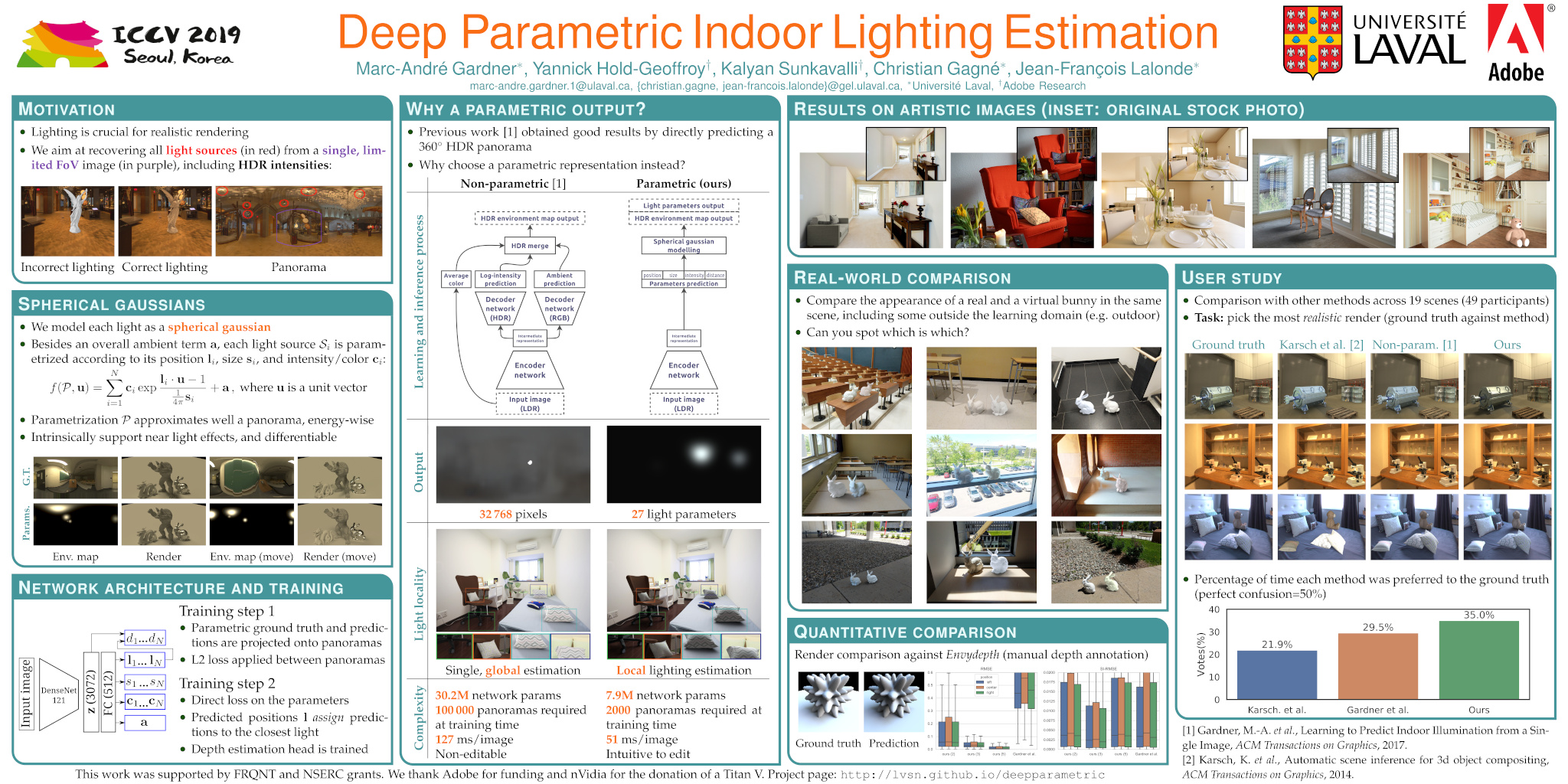

We present a method to estimate lighting from a single image of an indoor scene. Previous work has used an environment map representation that does not account for the localized nature of indoor lighting. Instead, we represent lighting as a set of discrete 3D lights with geometric and photometric parameters. We train a deep neural network to regress these parameters from a single image, on a dataset of environment maps annotated with depth. We propose a differentiable layer to convert these parameters to an environment map to compute our loss; this bypasses the challenge of establishing correspondences between estimated and ground truth lights. We demonstrate, via quantitative and qualitative evaluations, that our representation and training scheme lead to more accurate results compared to previous work, while allowing for more realistic 3D object compositing with spatially-varying lighting.
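The parameters-to-environment-map idea above can be sketched in a few lines. This is a minimal illustration, not the authors' layer: it assumes each light is described by a unit direction, an angular size in steradians, and an RGB color, that each light is splatted onto an equirectangular map with a spherical Gaussian lobe, and that the loss is a plain mean squared error between maps. The dictionary keys, the coordinate convention, and the function names are all hypothetical. The code is pure Python and shows only the forward computation; written in an autodiff framework, the same arithmetic would be differentiable with respect to the light parameters.

```python
import math


def render_lights_to_envmap(lights, ambient=(0.0, 0.0, 0.0), height=16, width=32):
    """Project parametric lights onto an equirectangular environment map.

    Each light is a dict with hypothetical keys (assumptions for this sketch):
      'direction': unit 3-vector (x, y, z), y pointing up
      'size':      angular size in steradians (controls the lobe width)
      'color':     RGB intensity
    Returns a height x width nested list of [r, g, b] pixels.
    """
    envmap = []
    for row in range(height):
        # Polar angle measured from the +y (up) axis, sampled at pixel centers.
        theta = math.pi * (row + 0.5) / height
        line = []
        for col in range(width):
            # Azimuth in [-pi, pi), sampled at pixel centers.
            phi = 2.0 * math.pi * (col + 0.5) / width - math.pi
            # Unit viewing direction for this pixel (assumed convention).
            u = (math.sin(theta) * math.sin(phi),
                 math.cos(theta),
                 -math.sin(theta) * math.cos(phi))
            pixel = list(ambient)
            for light in lights:
                d = light['direction']
                cos_angle = u[0] * d[0] + u[1] * d[1] + u[2] * d[2]
                # Spherical Gaussian lobe: 1 in the light direction, smooth falloff.
                weight = math.exp((cos_angle - 1.0) / (light['size'] / (4.0 * math.pi)))
                for k in range(3):
                    pixel[k] += light['color'][k] * weight
            line.append(pixel)
        envmap.append(line)
    return envmap


def envmap_l2_loss(pred, target):
    """Mean squared error between two environment maps of equal shape."""
    total, count = 0.0, 0
    for row_p, row_t in zip(pred, target):
        for px_p, px_t in zip(row_p, row_t):
            for a, b in zip(px_p, px_t):
                total += (a - b) ** 2
                count += 1
    return total / count
```

Because the loss compares rendered maps pixel by pixel, it never needs to decide which estimated light corresponds to which ground truth light, which is the point made in the abstract.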

Paper

Marc-André Gardner, Yannick Hold-Geoffroy, Kalyan Sunkavalli, Christian Gagné, and Jean-François Lalonde

Deep Parametric Indoor Lighting Estimation

International Conference on Computer Vision (ICCV), 2019

[arXiv version] [CVF] [BibTeX]

Dataset

The dataset used is the Laval Indoor HDR Database. We processed around 1600 panoramas using EnvyDepth to obtain panoramic depth images. These depth images (PFM format) can be downloaded here. The depth dataset is provided free of charge, but note that you still need a valid license to use the Laval Indoor HDR Database (see this page for more details). Also note that depth annotations are not available for every HDR panorama in the database, only for 1540 of them.
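For readers unfamiliar with PFM, the following is a minimal sketch of a reader using only the Python standard library. It follows the common PFM layout (a `PF` or `Pf` magic line, a `width height` line, a scale line whose sign encodes endianness, then raw 32-bit floats stored bottom row first). It assumes the header dimensions sit on a single line; whether the depth files here are single-channel (`Pf`) has not been checked, and the function name `read_pfm` is hypothetical.

```python
import struct


def read_pfm(f):
    """Read a PFM image from a binary file object.

    Returns (rows, width, height, channels), where rows is a list of
    top-to-bottom scanlines, each a flat list of floats.
    """
    magic = f.readline().decode('ascii').strip()
    if magic not in ('PF', 'Pf'):
        raise ValueError('not a PFM file: bad magic %r' % magic)
    channels = 3 if magic == 'PF' else 1

    width, height = (int(v) for v in f.readline().decode('ascii').split())

    # A negative scale means little-endian data, a positive one big-endian.
    scale = float(f.readline().decode('ascii').strip())
    endian = '<' if scale < 0 else '>'

    count = width * height * channels
    raw = f.read(4 * count)
    if len(raw) != 4 * count:
        raise ValueError('truncated PFM data')
    data = struct.unpack('%s%df' % (endian, count), raw)

    # PFM stores scanlines bottom-to-top, so reverse them for top-to-bottom order.
    row_len = width * channels
    rows = [list(data[r * row_len:(r + 1) * row_len]) for r in range(height)]
    rows.reverse()
    return rows, width, height, channels
```

A file on disk would be read with `with open("depth.pfm", "rb") as f: rows, w, h, c = read_pfm(f)`, where `depth.pfm` is a placeholder file name.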

Results for comparison

We provide results of this technique (with 1 and 3 lights) applied to 2240 test images from the Laval Indoor HDR Dataset. Please follow this link for more details.

For more results, see the [Supplementary material].

Video

Video outlining the benefits of the proposed method in terms of spatially-varying lighting support and user-editable properties.

Results on stock photos

Acknowledgements

- Adobe Systems Inc. with generous gift funding;

- NSERC Discovery Grant RGPIN-2014-05314;

- NVIDIA Corporation with the donation of the Titan X and Titan V GPUs used for this research;

- MITACS, Prompt-Québec and E Machine Learning.