
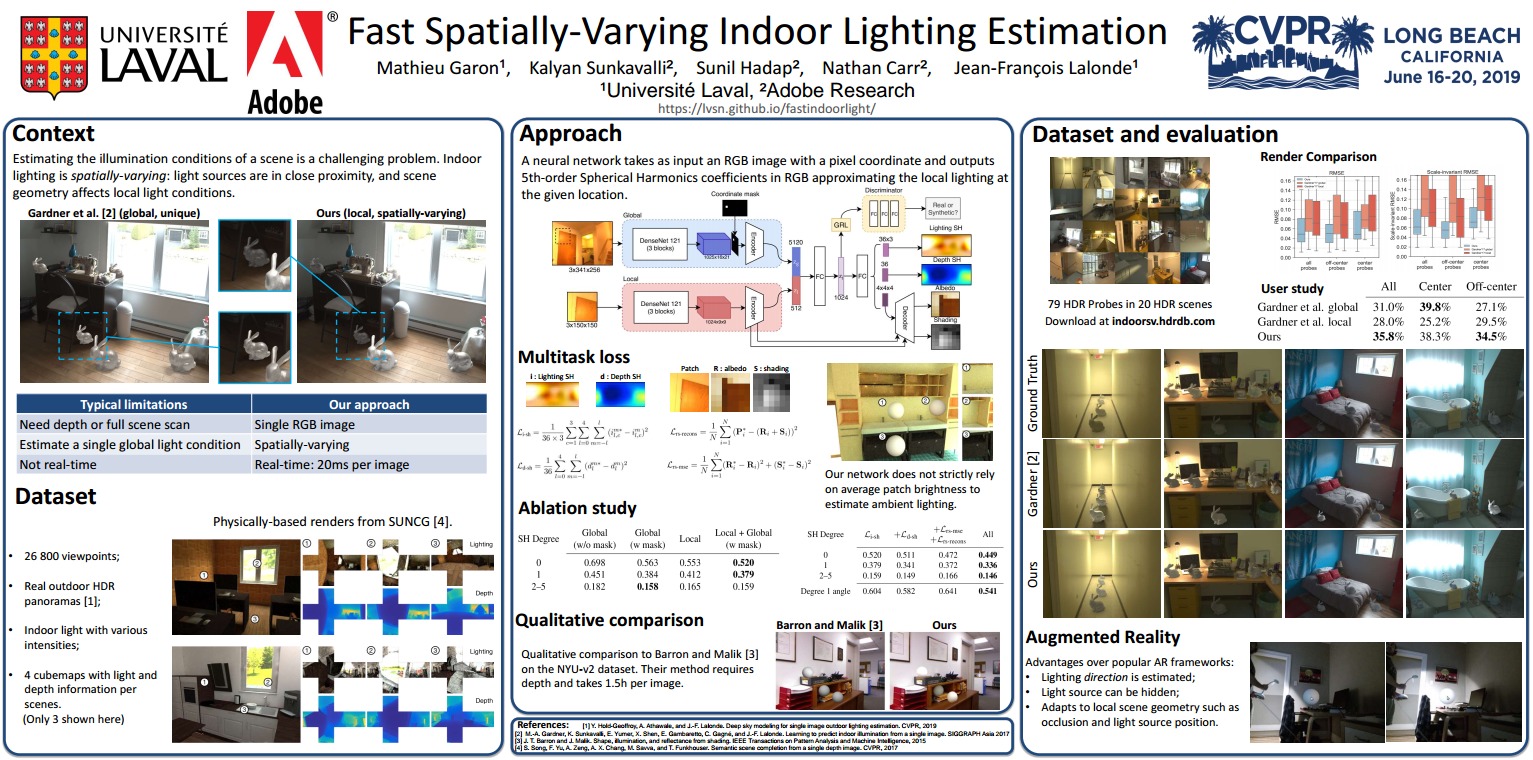

We propose a real-time method to estimate spatially-varying indoor lighting from a single RGB image. Given an image and a 2D location in that image, our CNN estimates a 5th-order spherical harmonic representation of the lighting at the given location in less than 20 ms on a laptop mobile graphics card. While existing approaches estimate a single, global lighting representation or require depth as input, our method reasons about local lighting without requiring any geometry information. We demonstrate, through quantitative experiments including a user study, that our results achieve lower lighting estimation errors and are preferred by users over the state of the art. Our approach can be used directly for augmented reality applications, where a virtual object is relit realistically at any position in the scene in real time.
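To give a sense of how compact a 5th-order spherical harmonic representation is, the short sketch below counts its coefficients. It assumes that "5th order" means degrees 0 through 5 inclusive and that one set of coefficients is stored per RGB channel. The function names are hypothetical and are not taken from the released code.

```python
def sh_coefficient_count(order: int) -> int:
    """Number of spherical harmonic basis functions for degrees 0..order."""
    if order < 0:
        raise ValueError("order must be non-negative")
    # Degree l contributes 2*l + 1 functions; summing over l = 0..order
    # gives (order + 1) ** 2.
    return (order + 1) ** 2


def sh_flat_index(l: int, m: int) -> int:
    """Map a (degree, order) pair to a position in a flat coefficient array."""
    if l < 0 or not -l <= m <= l:
        raise ValueError("require l >= 0 and -l <= m <= l")
    # Degrees below l occupy the first l * l slots; m runs from -l to l.
    return l * l + l + m


ORDER = 5  # assumption: degrees 0 through 5 inclusive
per_channel = sh_coefficient_count(ORDER)
rgb_total = 3 * per_channel  # assumption: one coefficient set per colour channel

print(per_channel, rgb_total)
```

Under these assumptions, the lighting at one image location is described by a few dozen numbers per colour channel, which is part of what makes per-location prediction and real-time relighting practical.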

Paper

Mathieu Garon, Kalyan Sunkavalli, Sunil Hadap, Nathan Carr and Jean-François Lalonde

Fast Spatially-Varying Indoor Lighting Estimation

Conference on Computer Vision and Pattern Recognition (CVPR), 2019

[arXiv:1906.03799 pre-print] [CVF] [BibTeX]

Results for comparison

We provide the results of this technique applied to 2240 test images from the Laval Indoor HDR Dataset. Please follow this link for more details.

Slides

For more results, check out the [Supplementary material].

Images

Videos

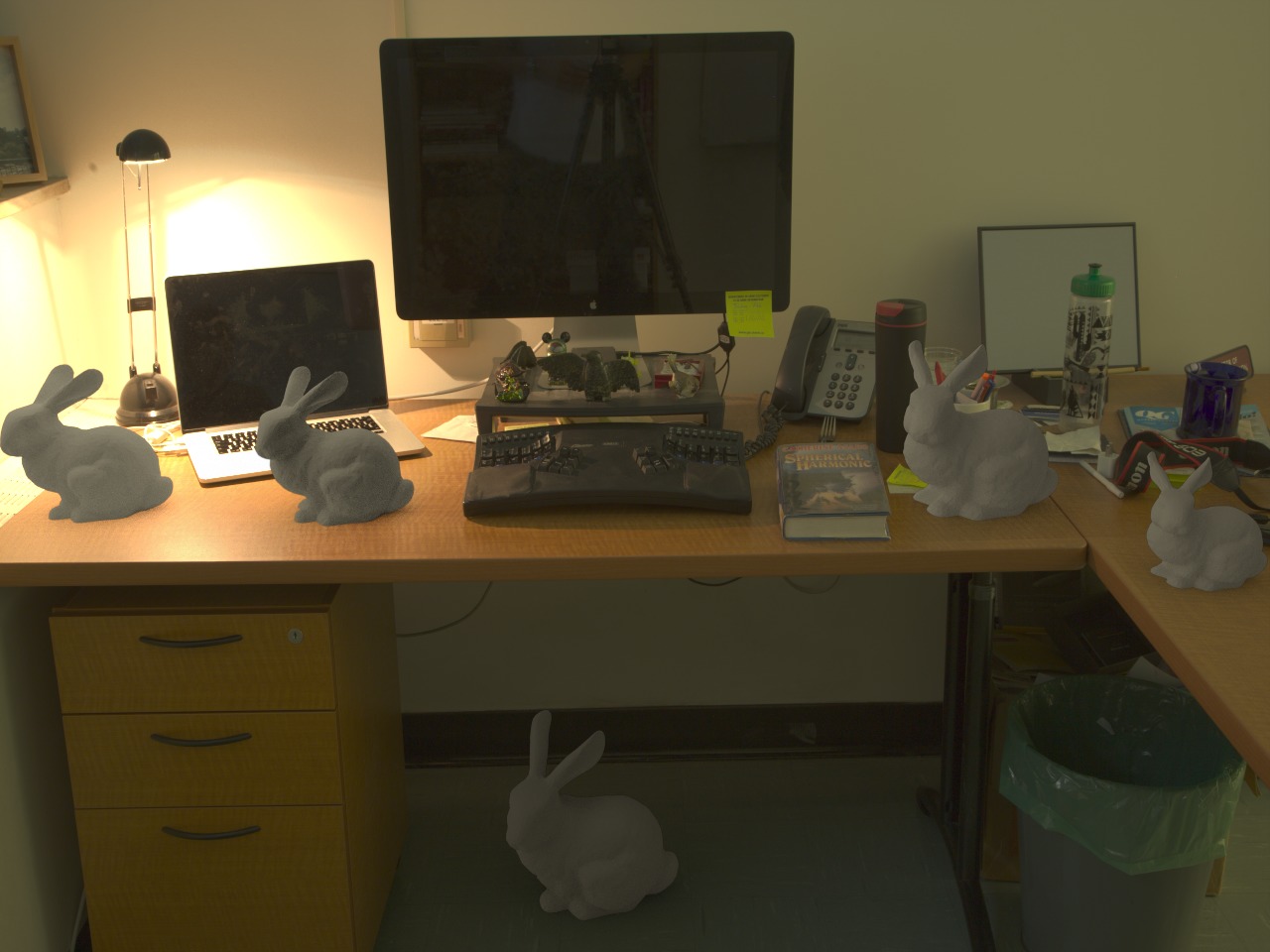

The position of the bunny and the RGB image are used as input to the neural network, and the prediction is used to light the bunny in real time. Note that depth is only used to scale the 3D model.

Synthetic

Real

Interaction

Acknowledgements

- Adobe Systems Inc. for generous gift funding;

- NSERC/Creaform Industrial Research Chair on 3D Scanning: CREATION 3D for the funding;

- NVIDIA Corporation for the donation of the Tesla K40 and Titan X GPUs used for this research.