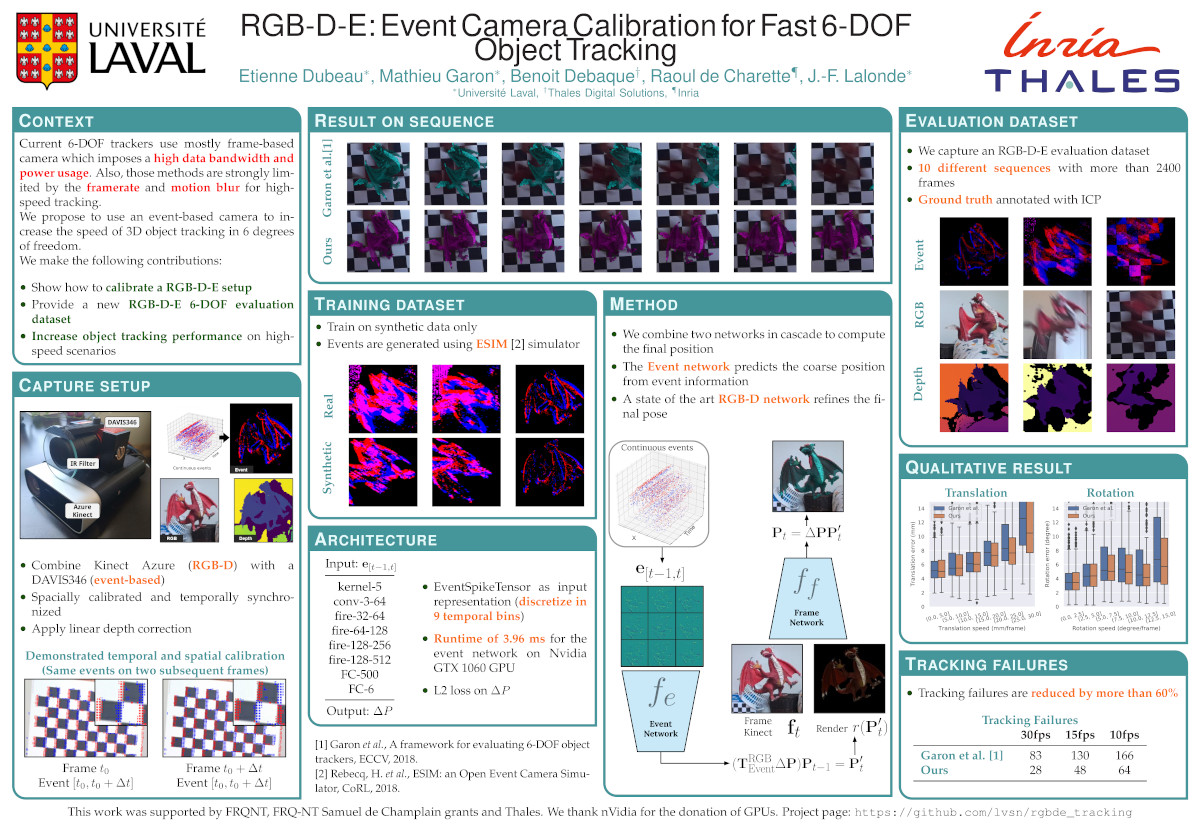

In this paper, we propose, for the first time, to use an event-based camera to increase the speed of 3D object tracking in 6 degrees of freedom. This application requires handling very high object speed to convey compelling AR experiences. To this end, we propose a new system which combines a recent RGB-D sensor (Kinect Azure) with an event camera (DAVIS346). We develop a deep learning approach, which combines an existing RGB-D network along with a novel event-based network in a cascade fashion, and demonstrate that our approach significantly improves the robustness of a state-of-the-art frame-based 6-DOF object tracker using our RGB-D-E pipeline.

Paper

Etienne Dubeau, Mathieu Garon, Benoit Debaque, Raoul de Charette and Jean-François Lalonde

RGB-D-E: Event Camera Calibration for Fast 6-DOF Object Tracking

In Symposium on Mixed and Augmented Reality (ISMAR) 2020

[arXiv:2006.05011 pre-print] [BibTeX]

Acknowledgements

- Jérémie Roy for his help with data acquisition;

- FRQ-NT Samuel de Champlain grant, the NSERC CRDPJ 524235-18 grant, and Thales for the funding;

- NVIDIA Corporation for the donation of the GPUs used for this research.